B2E INTERNAL TOOL

Building the best data cleaning process in the market research industry

COMPANY

Potloc

ROLE

Product Designer

FIELD

Market Research

YEAR

2023/2025

Quick summary

For two years, I led Product Design for the Data Quality and Fraud Prevention team at Potloc, overseeing a business-critical part of the company's offer. Data integrity is a growing challenge: according to IBM, poor-quality data cost the U.S. economy roughly $3 trillion in 2016, and the problem has only grown since.

Despite growing awareness, survey data fraud (from click farms and VPN manipulation to GenAI-generated responses) remains a serious and evolving threat.

14

Tech & human quality checks

0%

No more black-box suppliers

100%

Transparency

Automated data cleaning process

How often have you asked vendors to clean your sample or replace respondents after delivery? At Potloc, we prioritize data quality above all else. Our three-part approach selects the right survey sources, optimizes the respondent experience, and uses 14 quality checks to deliver only the most reliable insights.

Overview

Objective

By implementing rigorous quality checks before, during, and after each survey, we aim to filter out fraudulent, inattentive, or disengaged respondents while maintaining transparency and trust. This approach not only protects data integrity but also positions Potloc as an industry leader in delivering reliable, actionable market research insights that clients can confidently use to drive decision-making.

Key benefits

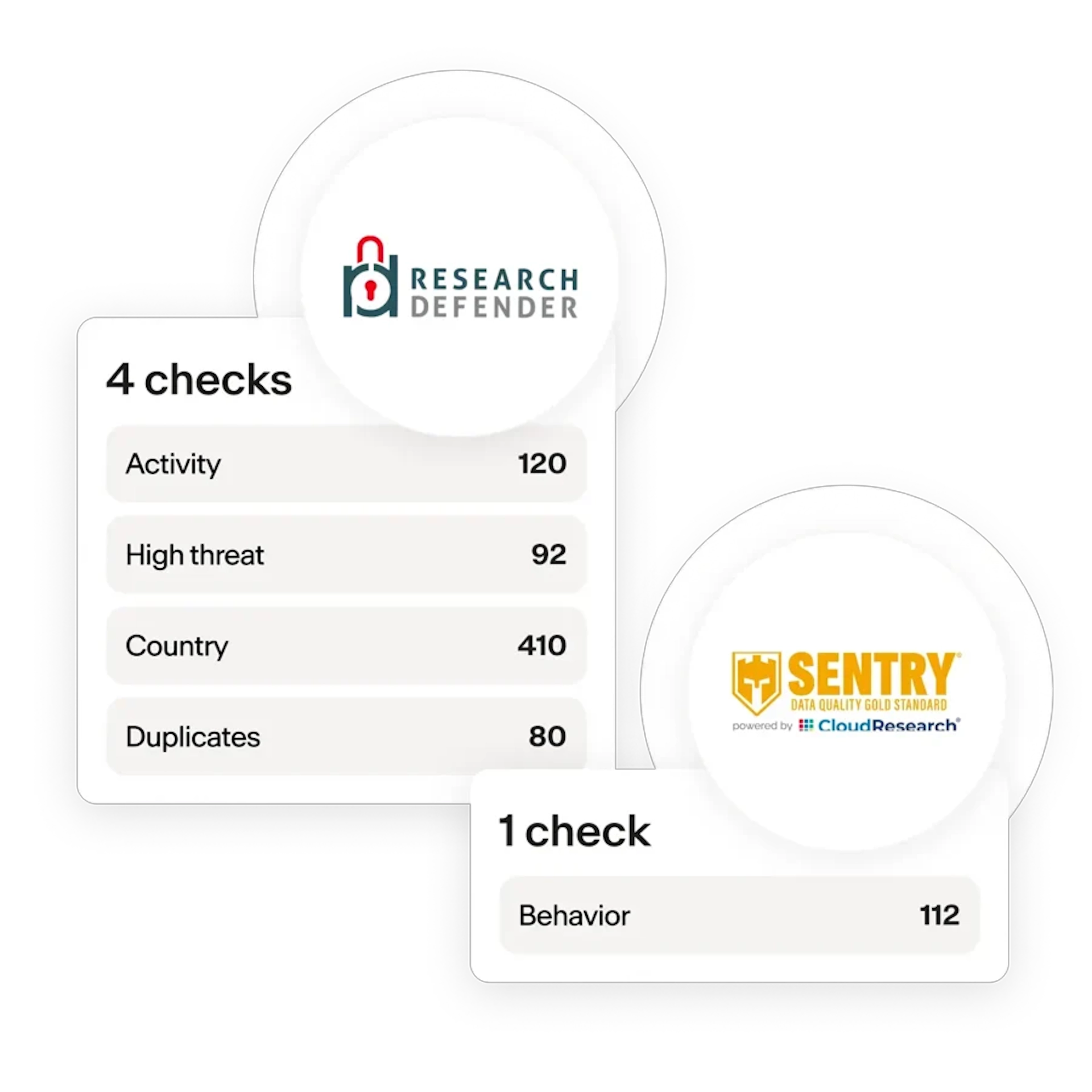

- Multi-layered quality checks ensure reliable, authentic insights for confident decision-making.

- 100% transparency into our data validation process—no black-box suppliers.

- 14 human and technical checks across all survey stages exceed industry standards.

- Proactive quality control eliminates costly sample replacements and protects research budgets.

Discovery

We analyzed data patterns and interviewed researchers, data cleaning teams, and clients. This revealed that quality issues can arise at every stage of a survey. We also learned that problematic respondents aren't only fraudsters; many are simply disengaged or rushing. Both types degrade data quality, so they need to be caught at multiple touchpoints.

Having faced these challenges as a User Researcher myself, I understood the problem firsthand.

This discovery phase included:

- Reporting through dashboards

- Interviews and shadowing

- Data Quality definition

- Risks & Issues workshop

Solution

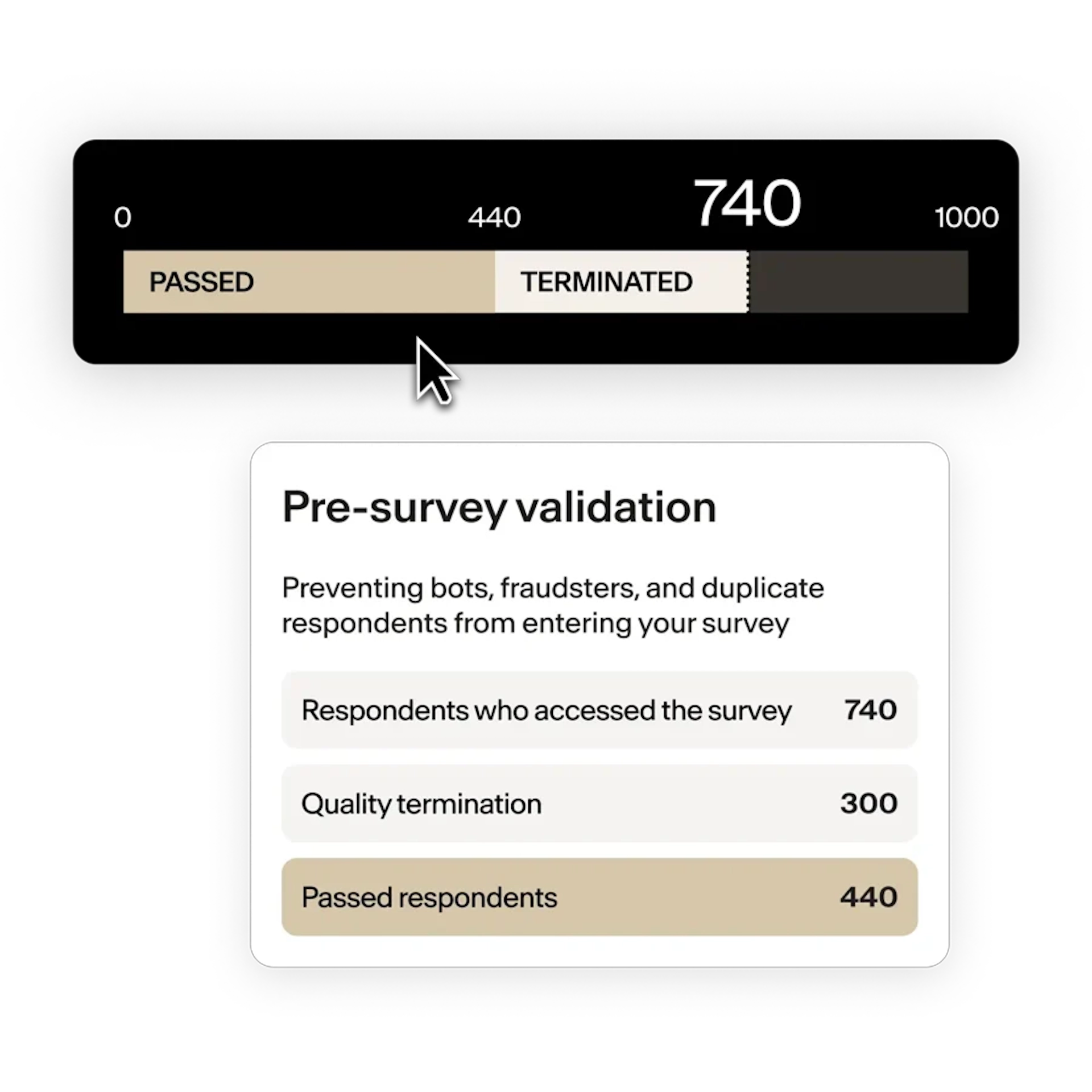

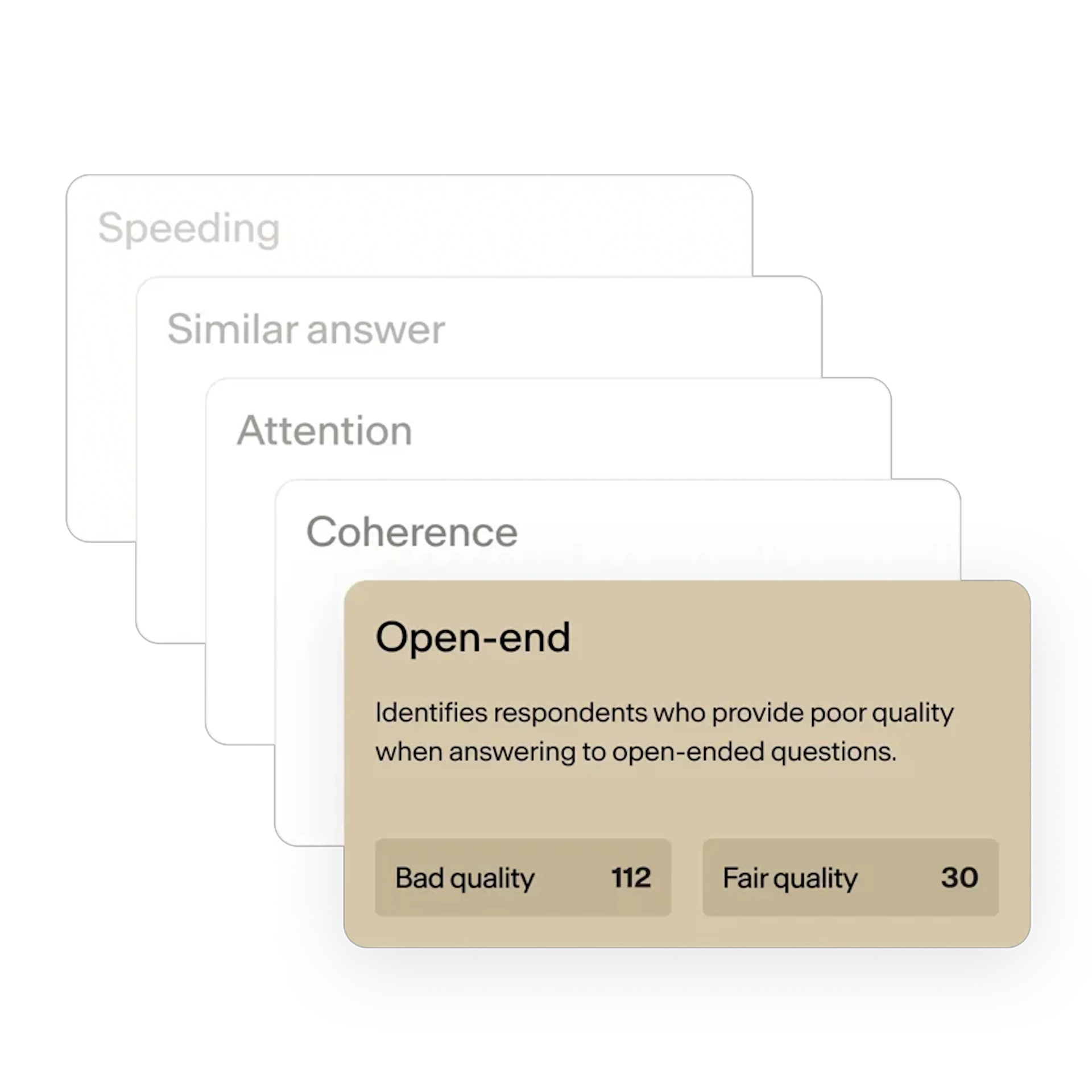

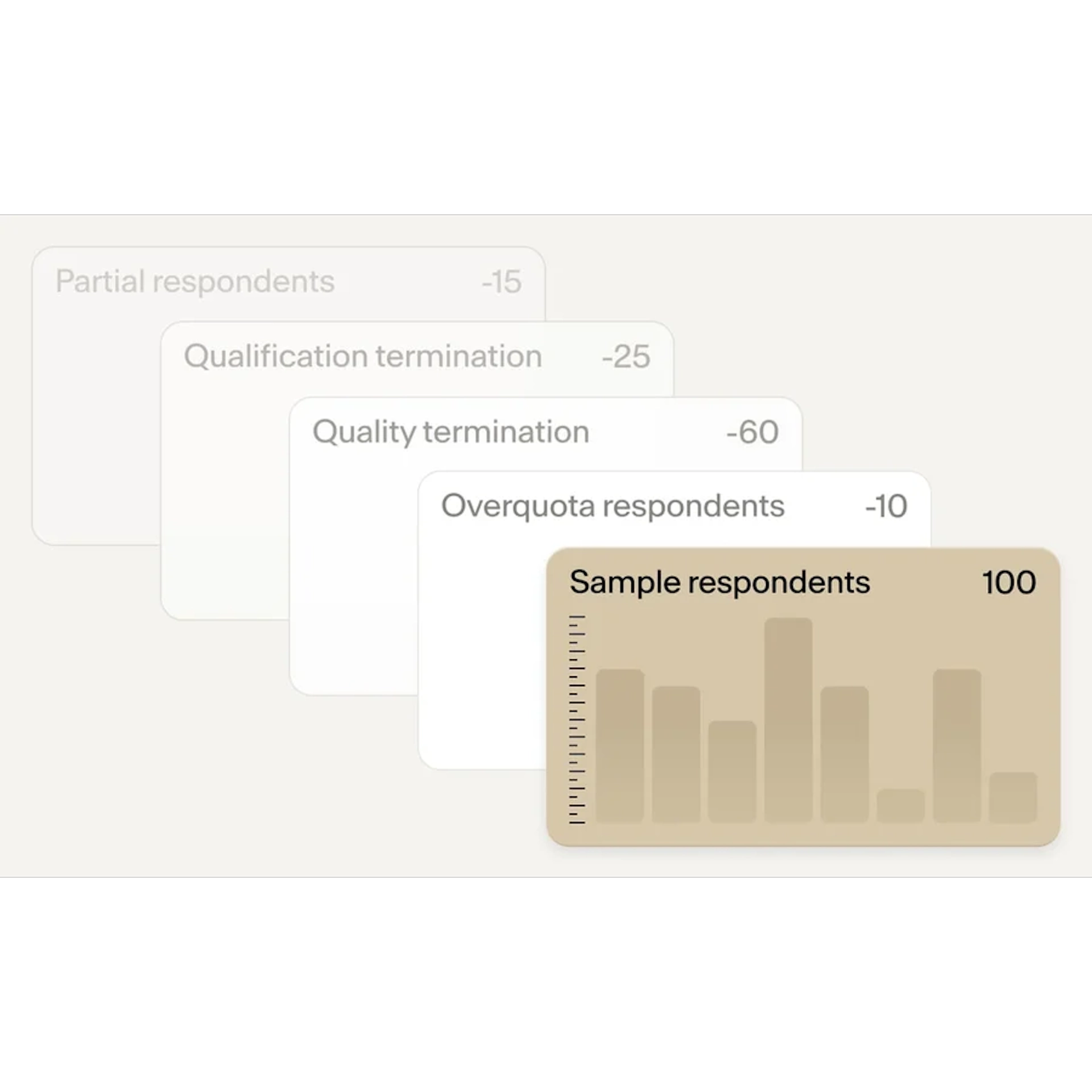

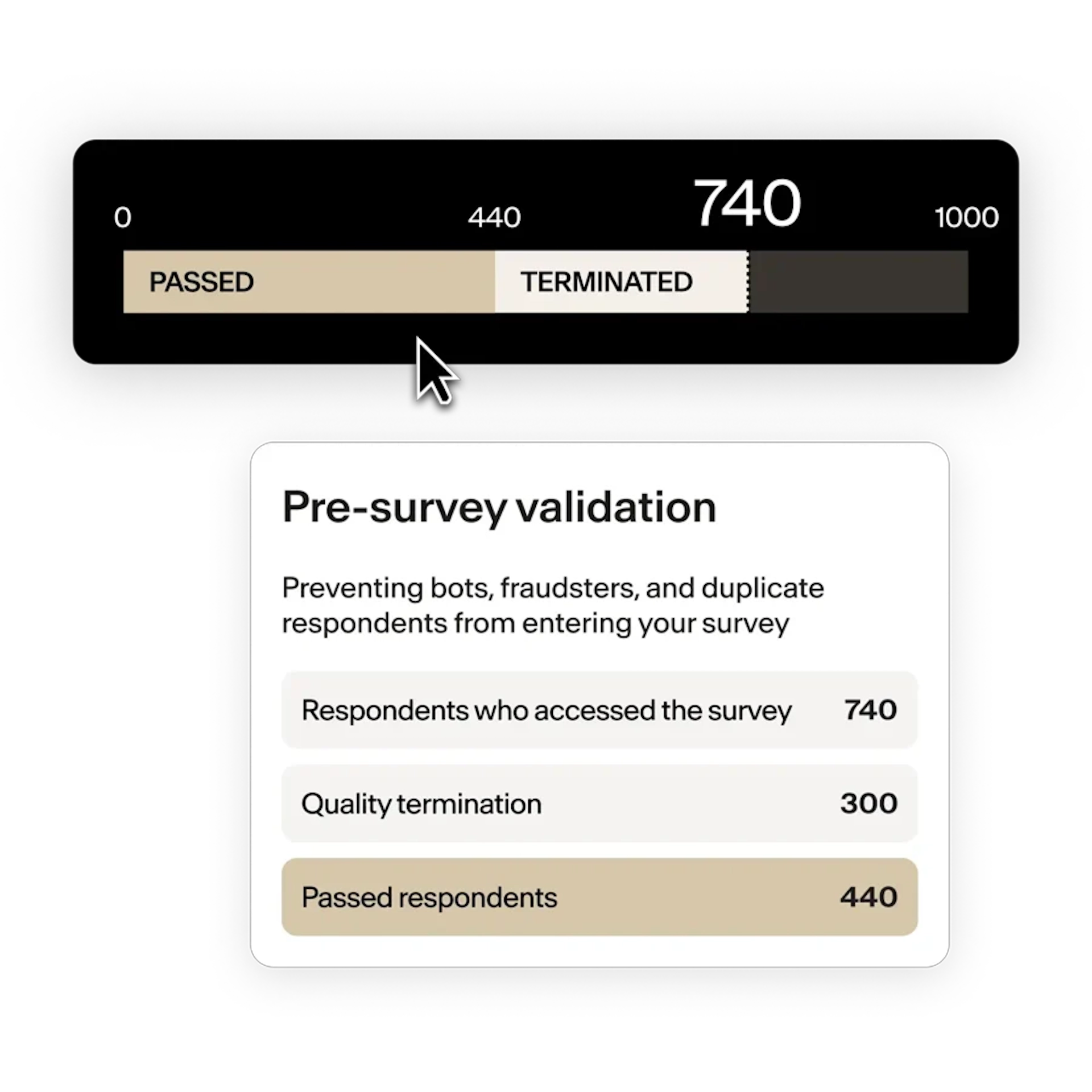

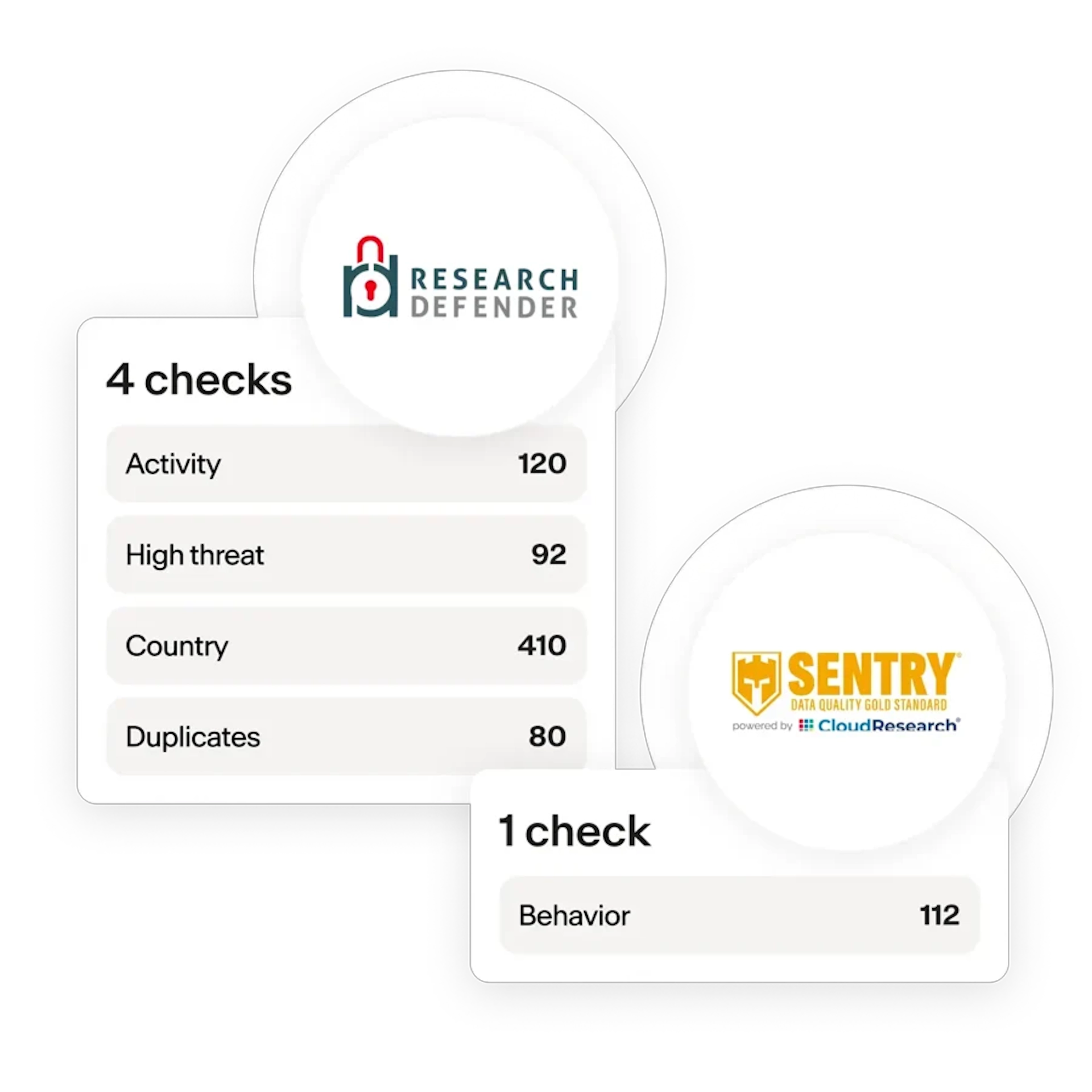

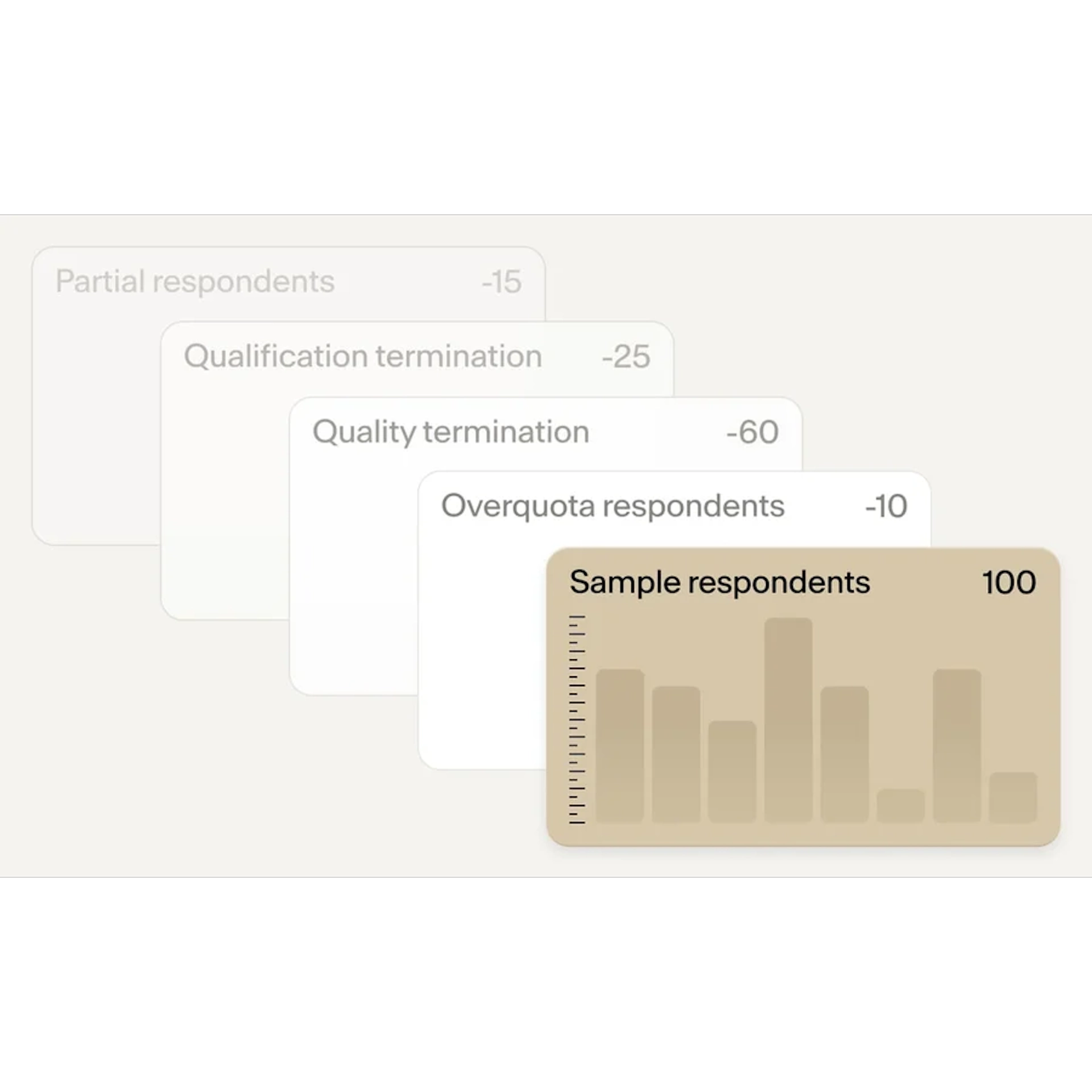

We built a 94% automated data quality system using 14 checks across three phases:

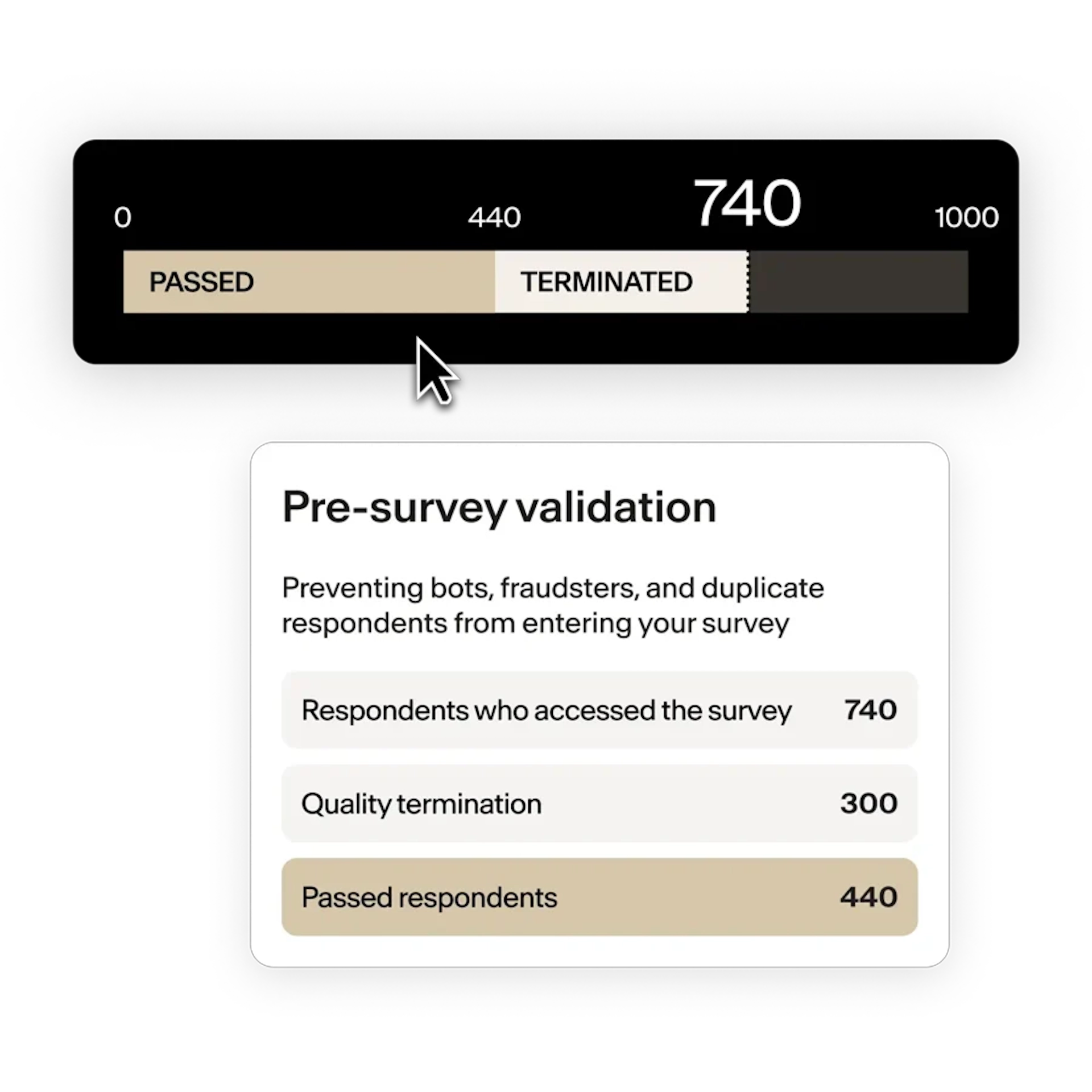

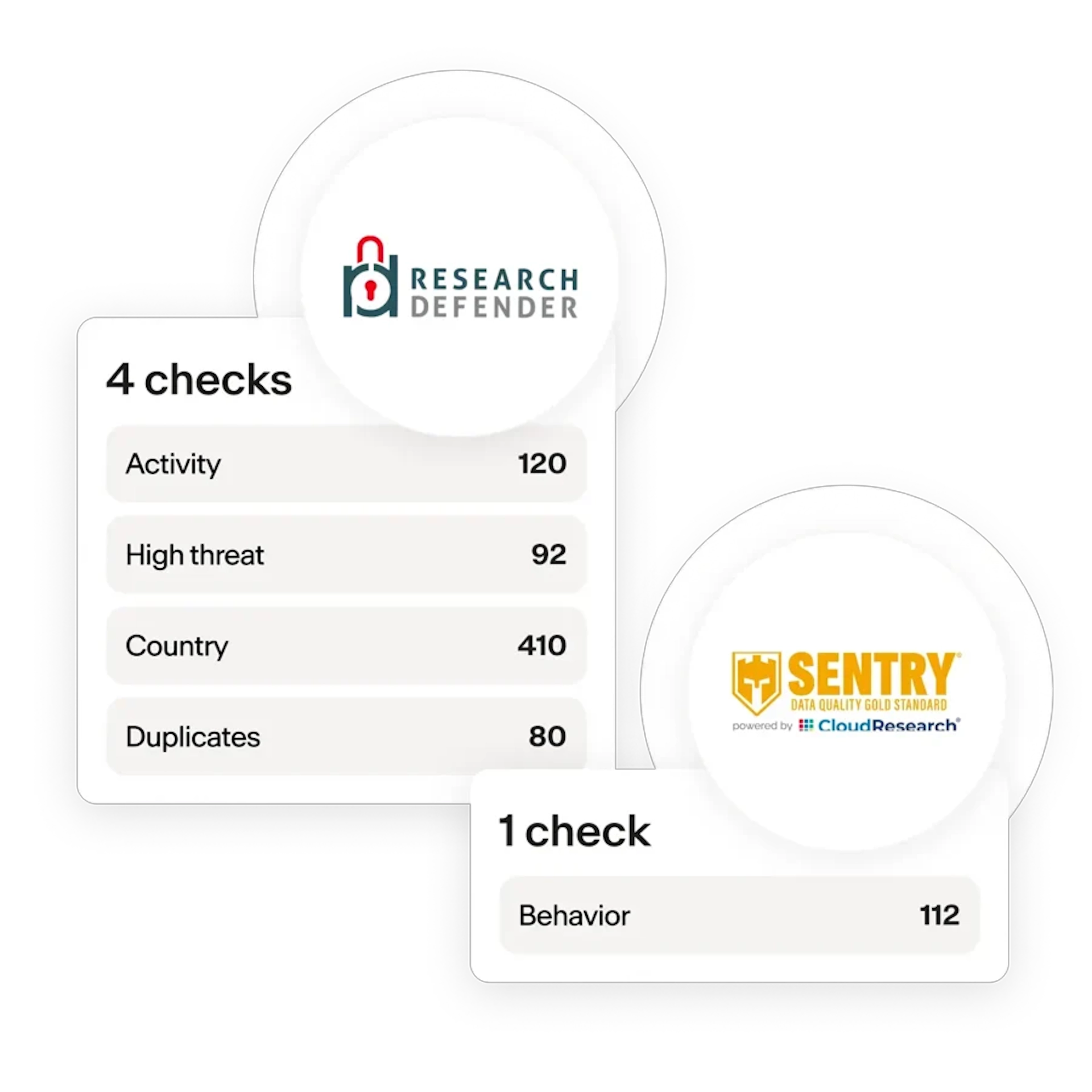

- Pre-survey checks: Validate respondent authenticity and filter out fraudsters and bots.

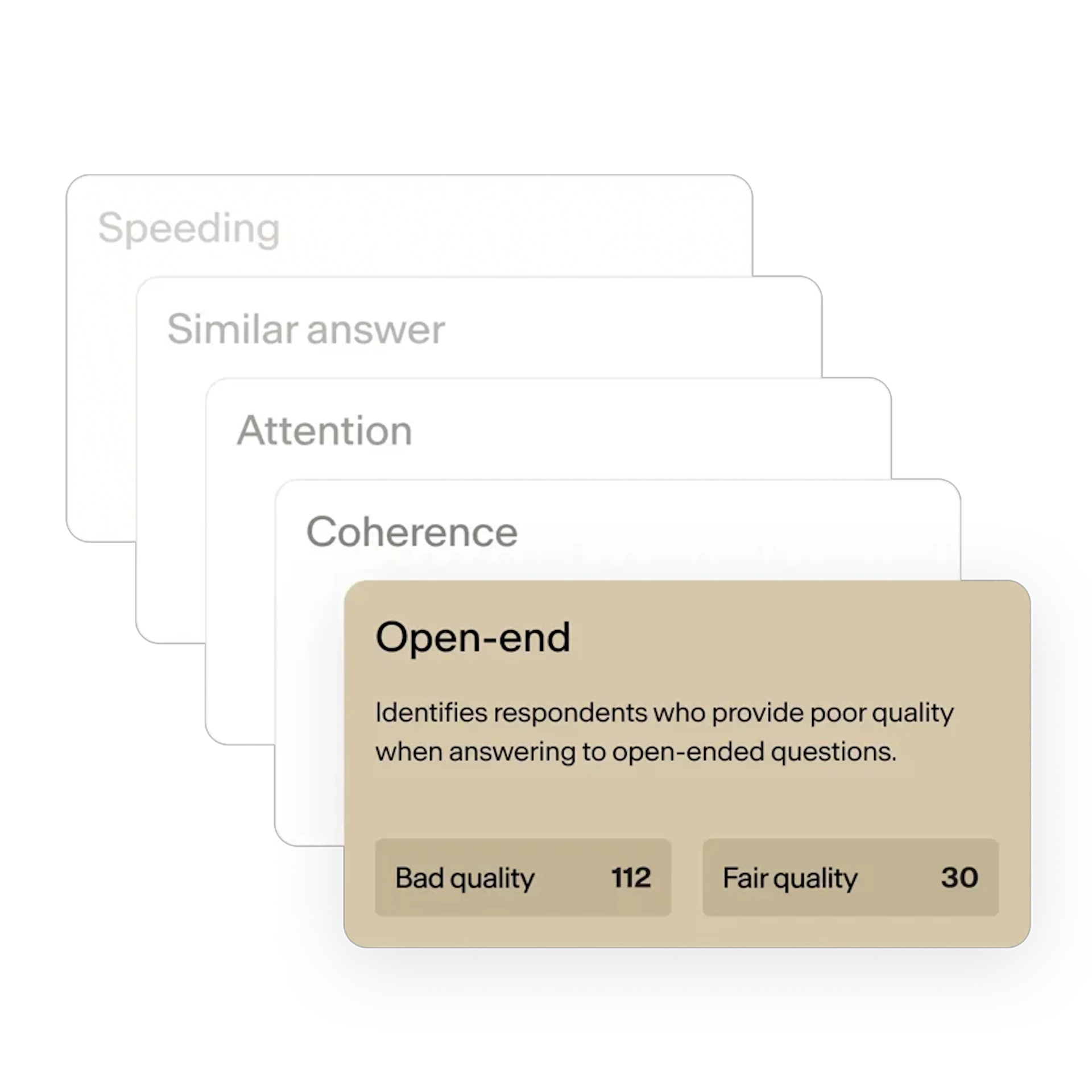

- In-survey checks: Monitor behavior in real time to identify speeding, straightlining, or inconsistent answers.

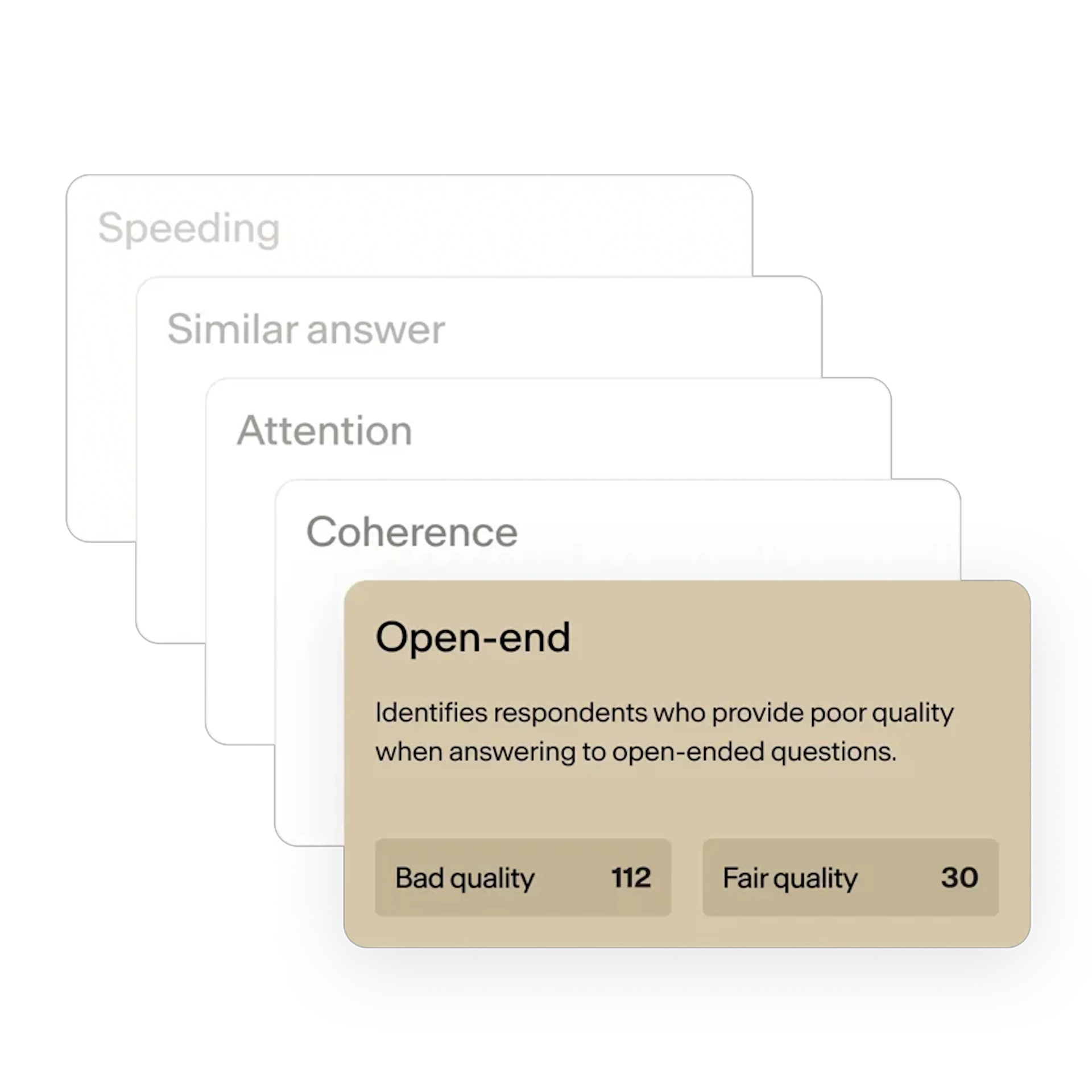

- Post-survey checks: Review open-ended answers for coherence, relevance, and engagement using human and AI analysis.

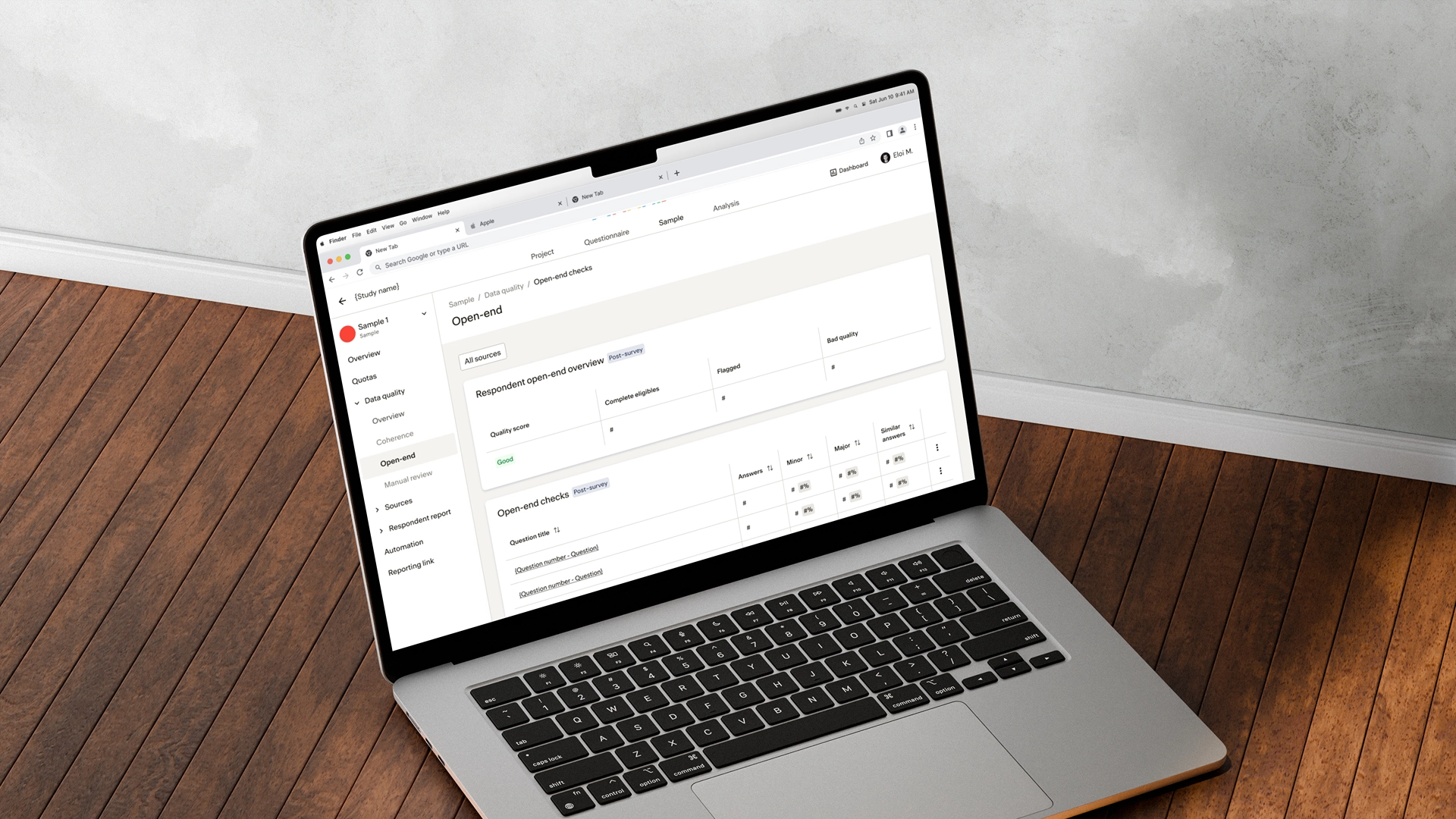
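To make the in-survey checks more concrete, here is a minimal Python sketch of two widely used heuristics: speeding and straightlining. The thresholds (finishing in under 40% of the median completion time, giving the same answer to every item of a grid of at least five items), the `Response` type, and the function names are illustrative assumptions, not Potloc's actual rules.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Response:
    # Hypothetical record for one respondent (illustrative fields only).
    respondent_id: str
    duration_seconds: float
    grid_answers: list[int]  # answers to one matrix question, e.g. on a 1-5 scale


def flag_speeders(responses: list[Response], ratio: float = 0.4) -> set[str]:
    """Flag respondents who finished faster than `ratio` x the median duration."""
    cutoff = ratio * median(r.duration_seconds for r in responses)
    return {r.respondent_id for r in responses if r.duration_seconds < cutoff}


def flag_straightliners(responses: list[Response], min_items: int = 5) -> set[str]:
    """Flag respondents who gave the identical answer to every item of a long grid."""
    return {
        r.respondent_id
        for r in responses
        if len(r.grid_answers) >= min_items and len(set(r.grid_answers)) == 1
    }


if __name__ == "__main__":
    sample = [
        Response("a", 600, [4, 2, 5, 3, 4, 1]),
        Response("b", 540, [3, 3, 3, 3, 3, 3]),
        Response("c", 120, [5, 4, 2, 3, 1, 2]),
        Response("d", 660, [2, 4, 3, 5, 1, 4]),
    ]
    print(sorted(flag_speeders(sample)))        # ['c']
    print(sorted(flag_straightliners(sample)))  # ['b']
```

Relative thresholds (a fraction of the median) are used here because acceptable completion times vary from one questionnaire to another; a fixed number of seconds would not transfer between surveys.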

We also built an internal dashboard that provides real-time quality metrics, flags suspicious responses, and enables seamless collaboration. This reduced manual review time by 8 hours per project while improving consistency and accuracy.

Deliverables included:

- Scheme of the full process

- Workshops with the tech team and users

- Backlog management

- UI Mockups

- Precise documentation

Results & Added Value

- Reduced manual data review time by 90% through automated quality checks, allowing teams to focus on strategic analysis.

- Users developed greater trust in tech-driven solutions and began proposing ideas for improvement.

- Successful internal solutions can now be offered directly to customers.

Data Quality definition

We define data quality as the collection of reliable and authentic data, achieved when people are honest, attentive, and engaged when taking a survey. This definition is in line with the Global Data Quality (GDQ) Initiative. We also believe that data quality emerges from a combination of three factors:

- The source: Where are the respondents coming from? As we’ll see, not all supply sources provide equally honest, attentive, and engaged respondents—or involve the same acquisition costs and field times.

- The respondent experience (UX): What kind of experience are you putting survey-takers through? Is it quick and smooth, or repetitive and tedious, without sufficient incentives? The more engaged your respondents are, the more effort they’ll put in, especially on open-ended responses.

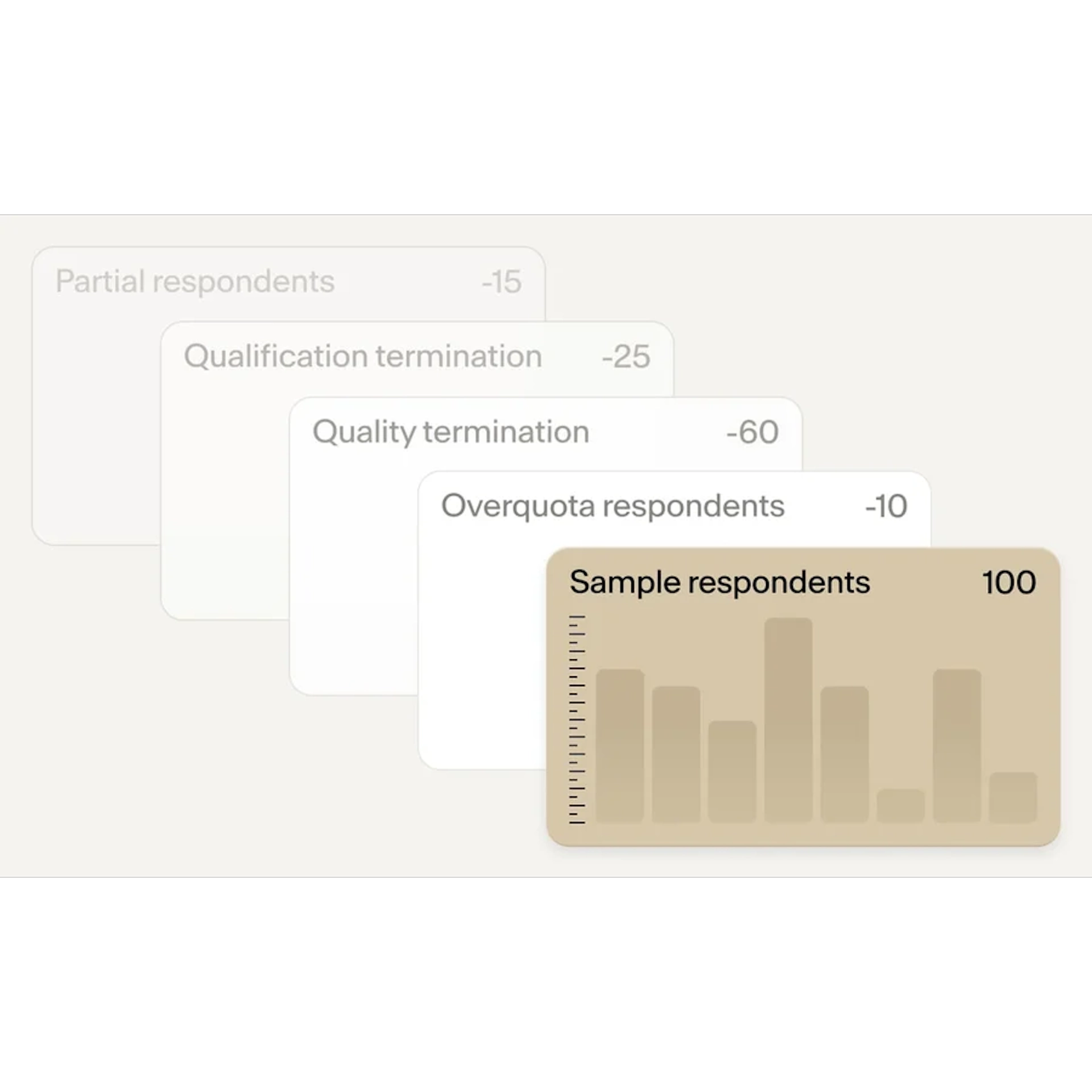

- Data cleaning: What measures are in place to monitor responses and filter out inadequate respondents? While this step is essential, it’s equally crucial to recognize that no amount of data cleaning will remove all fraudulent or suboptimal respondents from a sample.

Risks & Issues workshop

To ensure alignment across teams and build a shared understanding of data quality's business impact, we organized a collaborative workshop bringing together Product, Engineering, Customer Success, Sales, and Research stakeholders. Using FigJam as our remote collaboration tool, we structured an exercise where each team identified and prioritized the top 9 reasons why data quality was critical to their specific area of the business.

Scheme of the full process

Our data cleaning process unfolds across three critical survey phases and adapts to any data collection mode:

- CATI (phone)

- CAWI (web)

- Social media recruitment

Pre-survey checks authenticate respondents and block bots before they start. In-survey monitoring tracks real-time behavior to catch speeders, straightliners, and inconsistent patterns. Post-survey analysis uses human review and AI to evaluate open-ended responses for coherence and engagement.

This layered approach works universally across interview modes, ensuring consistent quality regardless of how respondents are reached.
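The layered, mode-independent structure described above can be sketched as a small pipeline in which each check is tagged with its phase and never looks at the interview mode. This is a minimal Python illustration under stated assumptions: the `Check` type, the three example checks and their rules, and the policy of removing a respondent as soon as any phase has a failed check are hypothetical and do not describe Potloc's production system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Phase(Enum):
    PRE = 1   # before the survey starts
    IN = 2    # while the respondent is answering
    POST = 3  # after completion


@dataclass
class Check:
    name: str
    phase: Phase
    passes: Callable[[dict], bool]  # True if the respondent passes this check


def run_pipeline(respondent: dict, checks: list[Check]) -> list[str]:
    """Run checks phase by phase and stop at the first phase with a failure.

    Returns the names of failed checks; an empty list means the respondent is kept.
    The interview mode (CATI, CAWI, social) is never consulted, so the same
    checks apply however the respondent was reached.
    """
    for phase in Phase:  # Enum iterates in definition order: PRE, IN, POST
        failed = [c.name for c in checks if c.phase is phase and not c.passes(respondent)]
        if failed:
            return failed  # later phases are skipped for removed respondents
    return []


# Hypothetical checks, one per phase, for illustration only.
CHECKS = [
    Check("duplicate_device", Phase.PRE, lambda r: not r.get("seen_device", False)),
    Check("speeding", Phase.IN, lambda r: r["duration_seconds"] >= 180),
    Check("open_end_length", Phase.POST, lambda r: len(r["open_end"].split()) >= 3),
]

if __name__ == "__main__":
    ok = {"mode": "CATI", "duration_seconds": 420, "open_end": "The price felt fair to me"}
    bad = {"mode": "CAWI", "duration_seconds": 90, "open_end": "ok"}
    print(run_pipeline(ok, CHECKS))   # []
    print(run_pipeline(bad, CHECKS))  # ['speeding']
```

Stopping at the first failing phase mirrors the idea that fraudsters caught before the survey never reach the more expensive post-survey review; a real system might instead record every failure for auditing.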

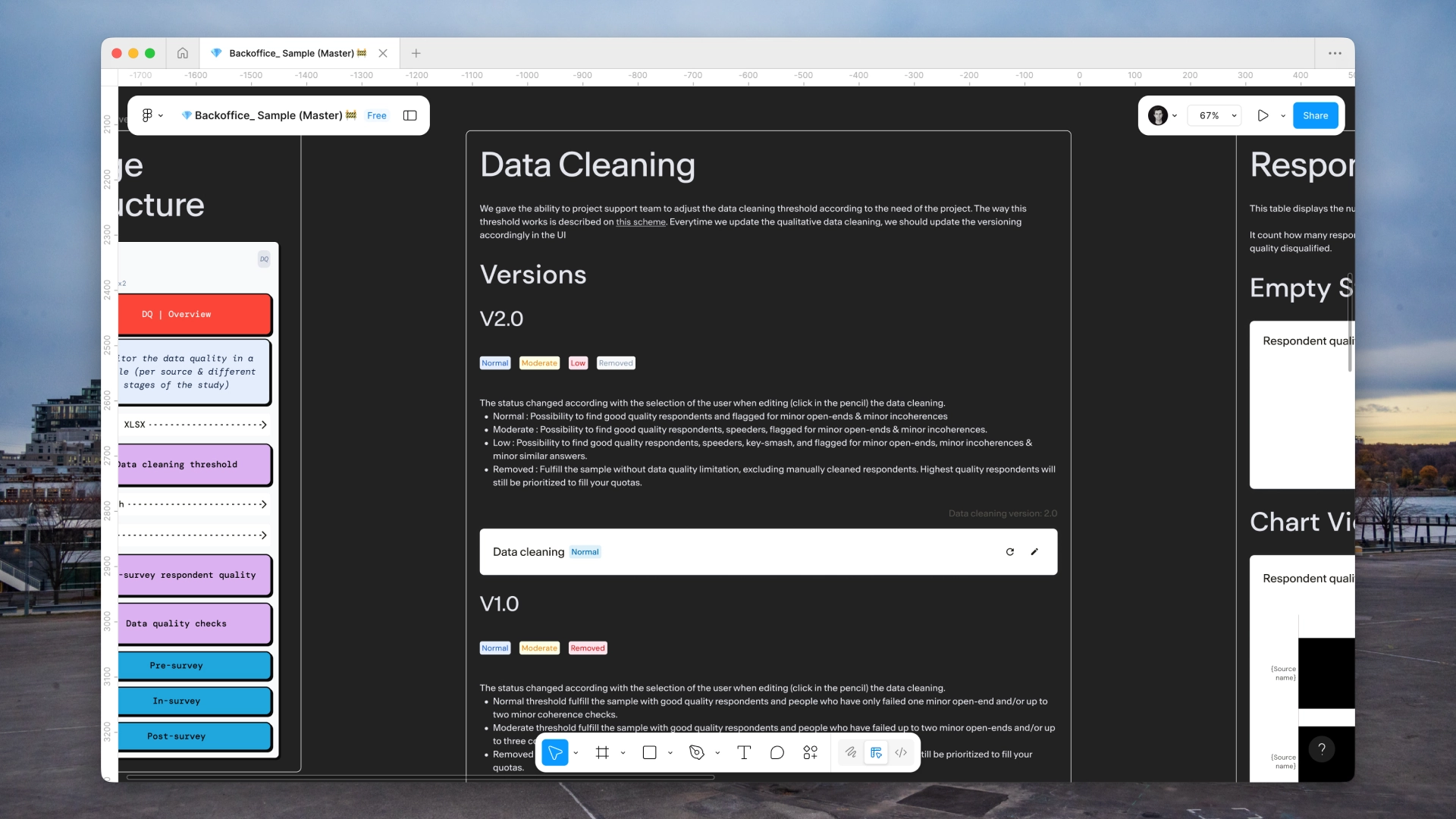

Precise documentation

To ensure clarity and traceability, I created two versions of our data cleaning documentation: one in Figma for designers and developers (enabling version control and technical collaboration), and one in Notion for end users (providing accessible, user-friendly guidance).

This dual approach maintained a clear history of iterations while helping both internal teams and clients understand our quality processes. The documentation became a key resource for onboarding, troubleshooting, and building trust in our methodology.

Leading Product Design for Potloc's Data Quality and Fraud Prevention team taught me that building trust in technology requires clear communication and transparency.

I worked with ML engineers, DesignOps specialists, and data scientists on many tasks: running workshops, managing backlogs, designing interfaces in Figma, collaborating through FigJam, and writing documentation in Notion.

The biggest lesson came from our users. Even though we automated 90% of data review, people didn't immediately trust the technology. They needed to understand how it worked before they could rely on it.

We solved this by being transparent. We documented every quality check, explained our scoring, showed real-time dashboards, and asked for feedback. This turned doubt into collaboration. Users started suggesting improvements and became advocates for the system.

The best solutions aren't just technically sound; they're trustworthy. By being open about our process, we built confidence in a new way of working and helped our teams focus on insights instead of manual review.

Let's work together

We'll start with a call to understand your expectations and the problem we need to solve.

Eloi Motte

UX & Product Designer
